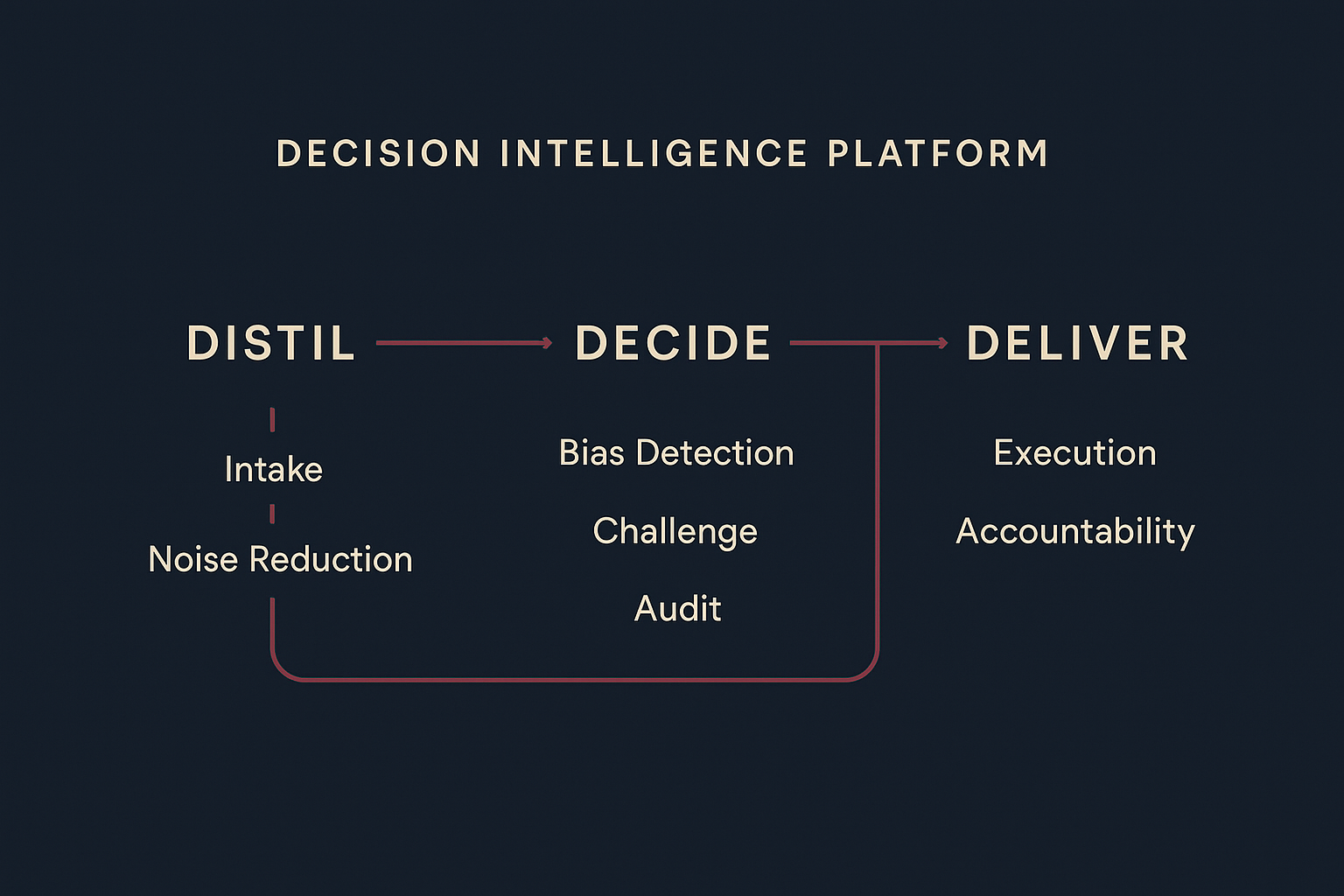

What we've built

Our own technology, not a reskin of someone else's

We don't resell other people's AI. Probity is our own technology — built because we needed it, and refined by using it every day. It has its own analysis pipeline, cognitive profiling, and integration layer.

Think Pipeline

Two-stage analysis: a fast classification identifies the decision type and stakes, then a deeper pass detects biases, reframes the decision, and poses challenging questions. It returns a clear verdict of CLEAR, CAUTION, or STOP before you commit. Not advice. A structured challenge.
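Probity's actual pipeline isn't published here, so what follows is only a toy Python sketch of the two-stage shape, under stated assumptions. Keyword heuristics stand in for the real classifier and bias detector, reframing is left out, and the names `classify`, `detect_biases`, `think`, `BIAS_CUES` and `HIGH_STAKES_WORDS` are hypothetical. It shows how a cheap first stage can set the stakes that a second stage then combines with any flagged biases to choose a verdict.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    CLEAR = "CLEAR"
    CAUTION = "CAUTION"
    STOP = "STOP"


@dataclass
class Classification:
    decision_type: str
    stakes: str  # "low" or "high" in this toy version


@dataclass
class Analysis:
    classification: Classification
    flagged_biases: list[str] = field(default_factory=list)
    challenges: list[str] = field(default_factory=list)
    verdict: Verdict = Verdict.CLEAR


# Hypothetical cue phrases standing in for a real bias detector.
BIAS_CUES = {
    "sunk cost fallacy": ("already invested", "already spent"),
    "overconfidence": ("guaranteed", "can't fail"),
    "bandwagon effect": ("everyone else is", "competitors are all"),
}
HIGH_STAKES_WORDS = ("contract", "hire", "budget", "acquisition")


def classify(text: str) -> Classification:
    """Stage 1: a cheap pass that labels decision type and stakes."""
    lowered = text.lower()
    if "hire" in lowered:
        decision_type = "hiring"
    elif "invest" in lowered:
        decision_type = "investment"
    else:
        decision_type = "general"
    stakes = "high" if any(w in lowered for w in HIGH_STAKES_WORDS) else "low"
    return Classification(decision_type, stakes)


def detect_biases(text: str, c: Classification) -> Analysis:
    """Stage 2: flag biases, pose a challenging question for each, pick a verdict."""
    lowered = text.lower()
    flagged = [bias for bias, cues in BIAS_CUES.items() if any(cue in lowered for cue in cues)]
    challenges = [f"How would you decide if {bias} were not a factor?" for bias in flagged]
    if flagged and c.stakes == "high":
        verdict = Verdict.STOP      # biased reasoning on a high-stakes call
    elif flagged or c.stakes == "high":
        verdict = Verdict.CAUTION   # one warning sign, not both
    else:
        verdict = Verdict.CLEAR
    return Analysis(c, flagged, challenges, verdict)


def think(text: str) -> Analysis:
    """Run both stages in order."""
    return detect_biases(text, classify(text))
```

For example, `think("We've already invested £2m, so we should sign the contract.")` classifies the decision as a high-stakes investment, flags the sunk cost fallacy, and returns `Verdict.STOP`.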

153-Bias Cognitive Library

A library of 153 documented cognitive biases, organised by decision stage, from how you perceive information through to how you learn from outcomes. Integrated directly into every analysis, not bolted on as a checklist.

Decision Audit Trail

Every decision recorded as a first-class entity: what was decided, what alternatives existed, what biases were flagged, who made the call, and what happened afterwards. Immutable. Searchable. Built for the moment an auditor asks.
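One way to model an immutable, searchable decision record is sketched below in Python, with hypothetical class and field names (`DecisionRecord`, `OutcomeRecord`, `AuditTrail`). Frozen dataclasses make each entry read-only once created, and outcomes are appended as separate entries linked by ID rather than edited into the original decision. This is an in-memory illustration only; a production audit trail would presumably also need durable, tamper-evident storage, which this sketch does not attempt.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    decided: str                        # what was decided
    alternatives: tuple[str, ...]       # options that were considered
    flagged_biases: tuple[str, ...]     # biases raised during analysis
    decided_by: str                     # who made the call
    recorded_at: datetime


@dataclass(frozen=True)
class OutcomeRecord:
    decision_id: str                    # links back to the decision
    outcome: str                        # what happened afterwards
    recorded_at: datetime


class AuditTrail:
    """Append-only, in-memory log: entries can be added and searched, never edited or removed."""

    def __init__(self) -> None:
        self._entries: list[DecisionRecord | OutcomeRecord] = []

    def record(self, entry: DecisionRecord | OutcomeRecord) -> DecisionRecord | OutcomeRecord:
        self._entries.append(entry)
        return entry

    def search(self, term: str) -> list[DecisionRecord]:
        """Case-insensitive match on what was decided or which biases were flagged."""
        term = term.lower()
        return [
            e for e in self._entries
            if isinstance(e, DecisionRecord)
            and (term in e.decided.lower() or any(term in b.lower() for b in e.flagged_biases))
        ]

    def outcomes_for(self, decision_id: str) -> list[OutcomeRecord]:
        """All outcomes recorded against one decision, in the order they were added."""
        return [e for e in self._entries if isinstance(e, OutcomeRecord) and e.decision_id == decision_id]
```

Keeping outcomes as separate entries means the original decision never changes, while `outcomes_for` still lets a reviewer see what happened afterwards.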

Cognitive Profile

Over time, Probity learns how you and your team make decisions: which biases appear most frequently, how quickly you commit, where your outcomes track well, and where they consistently don't. It is the feedback loop that helps judgment improve.

Persistent Memory

Our own memory architecture, built to solve the stateless problem at the heart of AI deployments: on its own, a model carries nothing from one session to the next. Project context, decision history, and institutional knowledge are persisted across sessions and kept searchable. The AI remembers what matters because we engineered it to, not because the model does it natively.

Guardrail Engine

Configurable rules that define when a human must review, when the AI can proceed, and what happens when a decision conflicts with your stated principles. Overrides are tracked with their rationale captured: every exception is recorded, never silent.
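A minimal sketch of configurable guardrail rules with override tracking, assuming a hypothetical `Rule` and `GuardrailEngine` design in Python rather than Probity's actual configuration format. Each rule pairs a predicate with an action, the strictest matching action wins, and an override is refused unless it carries a rationale.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Action(Enum):
    PROCEED = "proceed"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


# Ordered from least to most strict; used to pick the strictest matching action.
SEVERITY = [Action.PROCEED, Action.HUMAN_REVIEW, Action.BLOCK]


@dataclass
class Decision:
    summary: str
    value: float                                  # e.g. spend; units are an assumption
    tags: set[str] = field(default_factory=set)   # e.g. conflicts with stated principles


@dataclass
class Rule:
    name: str
    applies: Callable[[Decision], bool]           # predicate deciding whether the rule fires
    action: Action


@dataclass
class Override:
    rule: str
    overridden_by: str
    rationale: str


class GuardrailEngine:
    def __init__(self, rules: list[Rule]) -> None:
        self.rules = rules
        self.overrides: list[Override] = []       # every exception is kept, never dropped

    def evaluate(self, d: Decision) -> tuple[Action, list[str]]:
        """Return the strictest action among matching rules, plus the names of rules that fired."""
        fired = [r for r in self.rules if r.applies(d)]
        action = max((r.action for r in fired), key=SEVERITY.index, default=Action.PROCEED)
        return action, [r.name for r in fired]

    def override(self, rule_name: str, who: str, rationale: str) -> Override:
        """Record an exception to a rule; refuse it if no rationale is given."""
        if not rationale.strip():
            raise ValueError("An override needs a rationale")
        entry = Override(rule_name, who, rationale)
        self.overrides.append(entry)
        return entry
```

With one rule requiring review above an invented spend threshold and another blocking anything tagged as conflicting with a stated principle, a decision that trips both comes back as `Action.BLOCK`, and any exception has to go through `override` with a non-empty rationale, leaving a record behind.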

Distillation Engine

Voice notes, transcripts, documents, and emails are processed and classified automatically. Decisions, actions, risks, and commitments are surfaced and preserved. Everything else is explicitly discarded as noise.

Judgment Dashboard

An operational view of every decision in your pipeline — what needs judgment, what's stale, what's blocked, and what's been completed. A decision health monitor that surfaces what you're avoiding and what's drifting without a decision behind it.

Integration Layer

A Model Context Protocol (MCP) server for AI development environments. A REST API for any application. Probity works where your people already work: inside your existing AI tools, not as a separate app you have to remember to open.

AI Clarity Score

Eight honest questions, across four dimensions, that map where your organisation actually stands with AI. It takes two minutes, and no email is required. A good place to start before committing to anything.