The August 2026 deadline has moved - here is what happened

On 26 March 2026, the European Parliament voted in plenary to support a delay to the EU AI Act's high-risk obligations. The Council of the EU reached its general approach on 13 March. Trilogue negotiations between Parliament, Council, and Commission are now in their final stage, with political agreement targeted for late April.

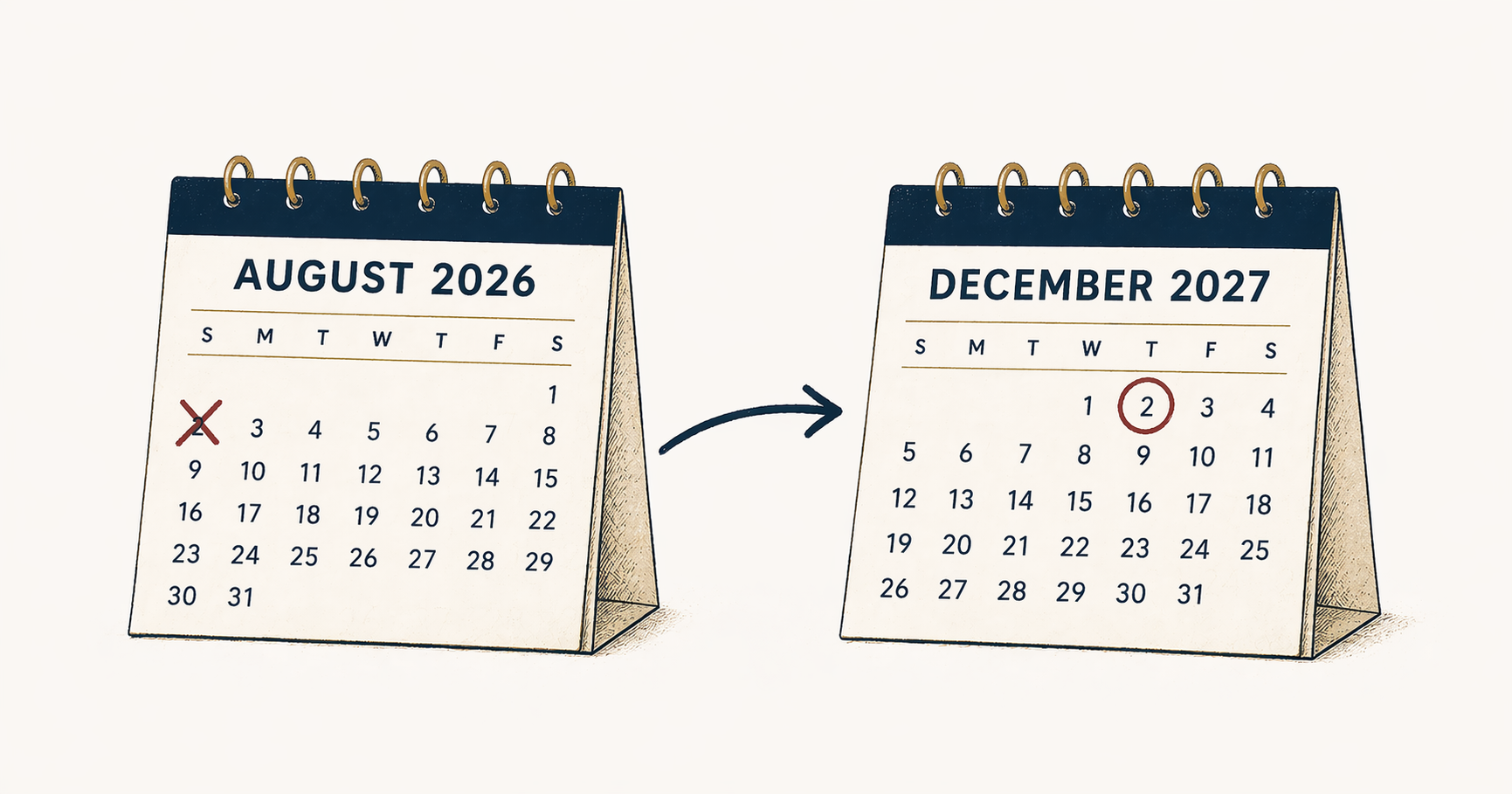

The new timeline, if adopted, looks like this. High-risk AI systems listed in Annex III - employment, credit, insurance, biometrics, education, law enforcement, critical infrastructure - move from 2 August 2026 to 2 December 2027. High-risk AI embedded in regulated products such as medical devices, machinery, lifts, and radio equipment moves to 2 August 2028. For Annex III systems, that is a 16-month extension on the deadline that sat at the centre of our March analysis.

The direction of travel is firm. The mechanism - the Digital Omnibus on AI - is not yet formally enacted, but Parliament, Council, and Commission are aligned on the outcome. Unless something unexpected breaks the trilogue, these dates will hold.

You have more time. That is the only thing that has changed.

Why the delay happened - and what it tells you

The delay is not a political concession. It is an admission that the regulatory plumbing is not ready.

Three facts make the point. Only 8 of the EU's 27 member states had designated their national contact points at the time of the Parliament vote. Harmonised standards - the technical specifications against which high-risk AI systems are meant to certify - have not been published, and CEN/CENELEC working groups are still drafting them. And the notified bodies accredited to perform conformity assessments have a fraction of the capacity that demand will require.

In other words, the infrastructure that was meant to receive compliant businesses in August does not exist. The Commission has acknowledged what practitioners have been saying for a year: asking companies to certify against standards that have not been written, through bodies that have not been accredited, under authorities that have not been designated, was never going to work.

Read the delay as a diagnosis. The ecosystem was underestimated. The regulator is buying time to finish building the machinery it needs to enforce the law it passed. That is a reason for humility about timelines, not a reason to relax about obligations.

The work that takes time inside your organisation - mapping your AI use, classifying risk, documenting data governance, building human oversight, putting logging in place - is not waiting on any of the external bottlenecks the delay addresses. It was always yours to do. It still is.

What is already in force

A common misreading of the delay is that the Act has not started yet. It has.

Prohibitions on unacceptable AI practices have been enforceable since 2 February 2025. That includes social scoring, AI systems that exploit psychological vulnerabilities, untargeted scraping for facial recognition databases, certain real-time biometric surveillance, and emotion recognition in workplaces and educational settings. These are not rules that will apply in 2027. They applied 14 months ago.

General-purpose AI model obligations have been enforceable since 2 August 2025. Providers of general-purpose AI models must maintain technical documentation, provide information to downstream providers, put a copyright compliance policy in place, and publish a summary of their training content; models that cross the systemic-risk threshold carry additional obligations. Organisations deploying these models inherit downstream transparency obligations.

The European AI Office has been operational since 2024, with cross-border oversight of general-purpose AI already active. The penalty framework, which applies fines of up to 35 million euros or 7% of global turnover for prohibited practices, is live.

If your organisation has been waiting for the Act to start, you are already 14 months behind on the provisions that matter first.

The full regulatory timeline as it now stands

For clarity, here is the timeline assuming the Digital Omnibus is agreed as proposed.

- August 2024 - The EU AI Act enters into force

- February 2025 - Prohibitions on unacceptable AI practices become enforceable

- August 2025 - General-purpose AI model obligations become enforceable

- December 2027 (proposed) - Annex III high-risk systems: employment, credit and insurance, biometric identification, education, law enforcement, migration, critical infrastructure

- August 2028 (proposed) - High-risk AI embedded in regulated products: medical devices, machinery, toys, lifts, radio equipment

The 2027 and 2028 dates are the proposed outcome of the trilogue. Until formal adoption completes, the existing August 2026 date remains the letter of the law. Planning against the new timeline is reasonable. Betting on it is not. The organisations that come through this cleanly will work to the earlier date until the later date is legally confirmed.

What "high-risk" still means - and why the category has not shrunk

The scope of Annex III has not changed. The list of high-risk categories is identical to the one businesses were preparing against six months ago. The delay moves the deadline, not the definition.

For mid-market UK businesses, the two categories that create the broadest exposure remain employment and financial decisioning. If you use AI anywhere in your hiring funnel - CV screening, interview analysis, skills assessment, workforce analytics - you are almost certainly operating a high-risk system. If AI informs credit scoring, life and health insurance pricing, lending decisions, or benefits eligibility, the same applies.

The most common misread we encounter in client conversations is the assumption that AI embedded in third-party SaaS tools sits outside the Act's scope because someone else built it. It does not. If you deploy a system, the Act's deployer obligations apply to you. The certification bottleneck that the delay is trying to solve is a provider problem. The inventory, risk classification, and governance work is yours.
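For a first pass at that classification step, a short sketch can help. It assumes a hypothetical inventory record (`AISystem`) and a hand-maintained table of use-case tags (`ANNEX_III_TRIAGE`); both are our illustration, not anything the Act or a regulator publishes. The area names follow Annex III, but the tag-to-area mapping is a simplification, it ignores the Act's exemptions for systems that pose no significant risk, and anything it flags still needs a proper legal assessment.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical triage table: use-case tags mapped to the Annex III area they
# most likely fall under. Tags are illustrative assumptions; this is a
# first-pass filter, not a legal classification.
ANNEX_III_TRIAGE = {
    "cv_screening": "Employment and workers management",
    "interview_analysis": "Employment and workers management",
    "workforce_analytics": "Employment and workers management",
    "credit_scoring": "Access to essential private and public services",
    "life_health_insurance_pricing": "Access to essential private and public services",
    "benefits_eligibility": "Access to essential private and public services",
    "exam_scoring": "Education and vocational training",
    "biometric_identification": "Biometrics",
}


@dataclass
class AISystem:
    name: str
    vendor: Optional[str]  # None if built in-house; a vendor name if embedded in SaaS
    use_cases: list[str] = field(default_factory=list)


def triage(system: AISystem) -> list[str]:
    """Return the Annex III areas a system's use-case tags point to, sorted."""
    areas = {ANNEX_III_TRIAGE[u] for u in system.use_cases if u in ANNEX_III_TRIAGE}
    return sorted(areas)


# A third-party applicant tracking tool still lands on the deployer's inventory.
ats = AISystem("Applicant tracking system", vendor="HR SaaS provider",
               use_cases=["cv_screening", "email_drafting"])
print(triage(ats))  # ['Employment and workers management']
```

An empty result means only that no tag matched. It does not mean the system is out of scope.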

The governance gap that time does not close

This is the point that matters.

The compliance work that realistic estimates put at 8 to 14 months is not work that external standards or notified body capacity can do for you. Mapping every AI system in your organisation - including the ones embedded in HR software, customer support tools, sales platforms, code pipelines, and logistics systems - is internal work. Classifying each system's risk tier is internal work. Documenting training data provenance, bias testing, and model performance is internal work. Naming human accountability for each high-risk system is internal work. Retrofitting logging to produce auditable decision trails is internal work.

None of this is blocked by the Commission's timeline. None of it accelerates because a harmonised standard is published. It happens on your clock, in your organisation, with your people.

The businesses that used August 2026 as a reason to start compliance work are well ahead of the businesses that used it as a reason to wait. The regulator changed the date. The organisations that chose to wait are still unready, and that was their choice.

An organisation that has not mapped its AI use by December 2027 will be in the same position as an organisation that had not mapped its AI use by August 2026 - except it had 16 more months of regulatory cover and declined to use them. The governance infrastructure is identical whether the deadline is four months away or 20 months away. The work does not happen faster if you start later.
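Of the internal work in this section, retrofitting logging is the most concrete engineering task. The sketch below is one minimal way to produce an auditable decision trail, assuming a local JSON Lines file and hypothetical names (`append_decision`, `verify_log`, `accountable_owner`). The Act does not prescribe this format; chaining each record to the previous record's SHA-256 hash is our illustrative choice for making after-the-fact edits detectable.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first record in a log


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record (without its own hash)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


def append_decision(log_path: str, system: str, model_version: str,
                    accountable_owner: str, inputs: dict, output: dict) -> str:
    """Append one decision record to a JSON Lines log and return its hash."""
    prev_hash = GENESIS
    try:
        with open(log_path, encoding="utf-8") as f:
            lines = f.read().splitlines()
        if lines:
            prev_hash = json.loads(lines[-1])["hash"]
    except FileNotFoundError:
        pass  # first record in a new log
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "model_version": model_version,
        "accountable_owner": accountable_owner,  # a named person, not a team
        "inputs": inputs,
        "output": output,
        "prev_hash": prev_hash,
    }
    record["hash"] = _digest(record)  # computed before the "hash" key exists
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record["hash"]


def verify_log(log_path: str) -> bool:
    """Recompute every hash and check each record points at its predecessor."""
    prev_hash = GENESIS
    with open(log_path, encoding="utf-8") as f:
        for line in f:
            record = json.loads(line)
            stored = record.pop("hash")
            if record["prev_hash"] != prev_hash or _digest(record) != stored:
                return False
            prev_hash = stored
    return True
```

In practice you would write to storage your auditors trust rather than a local file, and re-reading the whole file on every append is only acceptable at sketch scale.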

What AI readiness actually looks like

If the calendar is now less useful as a forcing function, the test shifts to a set of questions. A genuinely ready organisation can answer all of these without calling a meeting.

- Can you produce a complete inventory of every AI system in your organisation, including the AI embedded in third-party tools and services?

- For each AI system on that inventory, can you state whether it falls within a high-risk category, and on what basis?

- For each high-risk system, is there a named individual accountable for its outputs - not a team, a role, or a committee, but a person?

- Do your AI systems produce logs of their inputs, outputs, and decision logic, retained for an auditable period?

- Have you asked your key vendors what AI they have deployed in the services you buy, and what compliance obligations that creates for you?

- Does your AI documentation reflect what your systems actually do now, rather than what they were originally specified to do?

- Can your board articulate your AI risk exposure without calling IT?

The last question is the one that sorts the ready from the rest. Most boards cannot answer it, and in many organisations no one has ever been asked to prepare them to. That is not a compliance risk in isolation. It is a governance gap that was there before the regulation and will be there after it.
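The operational questions in that list can be turned into a repeatable check against your inventory. A minimal sketch, assuming a hypothetical `InventoryEntry` record and thresholds we chose for illustration: a documentation review at least every 365 days and an assumed minimum log retention of 183 days (roughly six months). Confirm both against the Act's text and your own legal advice before relying on them. The board question is deliberately left out; no script answers it.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class InventoryEntry:
    name: str
    vendor: Optional[str]            # None if built in-house
    high_risk: Optional[bool]        # None = not yet classified
    risk_basis: str = ""             # why it is (or is not) high-risk
    accountable_person: str = ""     # a named individual, not a team or committee
    log_retention_days: int = 0      # 0 = no logging in place
    vendor_questioned: bool = False  # have we asked the vendor what AI it runs?
    docs_reviewed: Optional[date] = None


def readiness_gaps(e: InventoryEntry, today: date,
                   min_retention_days: int = 183,  # assumption, not legal advice
                   max_doc_age_days: int = 365) -> list[str]:
    """Return the readiness questions this inventory entry cannot yet answer."""
    gaps = []
    if e.high_risk is None or not e.risk_basis:
        gaps.append("risk classification missing or unexplained")
    if e.vendor is not None and not e.vendor_questioned:
        gaps.append("vendor not asked about embedded AI")
    if e.docs_reviewed is None or (today - e.docs_reviewed).days > max_doc_age_days:
        gaps.append("documentation review overdue")
    if e.high_risk:  # accountability and logging checks apply to high-risk systems
        if not e.accountable_person:
            gaps.append("no named accountable individual")
        if e.log_retention_days < min_retention_days:
            gaps.append("logging absent or retention too short")
    return gaps


entry = InventoryEntry("CV screening module", vendor="HR SaaS provider",
                       high_risk=True, risk_basis="Annex III: employment",
                       docs_reviewed=date(2025, 1, 10))
for gap in readiness_gaps(entry, today=date(2026, 4, 1)):
    print("-", gap)
```

This example entry is classified but reports four gaps: the vendor has not been asked, the documentation is more than a year old, no one is named as accountable, and nothing is logged.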

The risk that is not moving

The regulatory deadline has moved. The insurance market has not.

Verisk, the largest insurance policy forms provider in the United States, introduced AI liability exclusions in January 2026 that are now spreading through the UK and European markets. W. R. Berkley proposed exclusions that bar claims tied to "any actual or alleged use" of AI. Mosaic Insurance declined to underwrite large language model risks altogether. These are commercial underwriting decisions responding to AI liability risk. They are unaffected by the legislative timeline in Brussels.

An organisation that reads the delay as permission to wait may find itself in an awkward position: with more time on the regulatory deadline and less time before its insurer excludes the coverage it was relying on. The question we posed in March is still open. Ask your broker this week whether your general liability and professional indemnity policies explicitly cover or exclude AI-related claims. If they cannot tell you, that is your answer.

Where to start if you have not started

If the delay has given your leadership team a reason to re-engage rather than relax, the starting point is the same as it was last month.

Begin with an AI inventory. It is the one step you can take immediately, it is fully within your control, and it defines the scope of everything else. A mid-market business typically completes an initial inventory in two to four weeks. Until you have it, every other compliance decision is a guess.

Extend the governance you already have. Most organisations already hold privacy policies, data processing records, and information security policies. AI governance extends these rather than replacing them. If you hold ISO/IEC 27001, extending it to ISO/IEC 42001:2023 is the shortest route to a defensible framework.

Prioritise your highest-exposure systems first. Employment tools and financial decisioning systems are where the Act's requirements are most prescriptive, where reputational harm compounds fastest, and where insurers are moving hardest. Start there.

If you want a structured entry point, our Discovery Session is a free two-hour working session that maps your AI use, identifies your risk areas, and defines your practical next steps. It is the fastest way to convert the 16 months the regulator just gave you into governance you can actually rely on.

The delay gave you more time to get this right. It did not give you a reason not to.

Rubicon Software helps UK businesses navigate AI adoption with governance built in from the start. If you need to assess your EU AI Act exposure, book a Discovery Session or read our March 2026 analysis for the underlying compliance detail.